Early Safety 1 theories dating back to the 1930s have shaped how we think about safety and accidents.

- Heinrich’s Pyramid

- Heinrich’s Domino Model

- Reason’s Swiss Cheese Model

- Dynamic Safety Model

- Perrow’s Normal Accident Theory

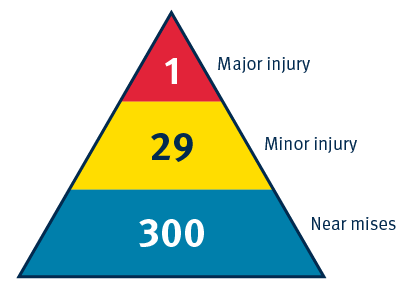

Heinrich's Safety Triangle – 1930s

Impact on safety thinking – ‘Blame the human’

Herbert Heinrich’s safety triangle significantly influenced safety thinking. The theory focuses on human action as the cause of accidents, diverting attention away from the systems.

Theory:

- There was a ratio between minor incidents and major accidents.

- Eliminating minor incidents would also eliminate major accidents.

- The majority of accidents were the result of unsafe acts or errors by workers.

Limitations:

- Linear approach to safety thinking.

- Focuses on counting incidents, rather than understanding and addressing safety risks.

- Overemphasis on worker behaviour and human error with minimal regard for system contributions.

Considerations:

- Relies on a simplified view of accident causality.

- Designed in the 1930s, so caution is needed when applying it to highly complex work environments such as healthcare.

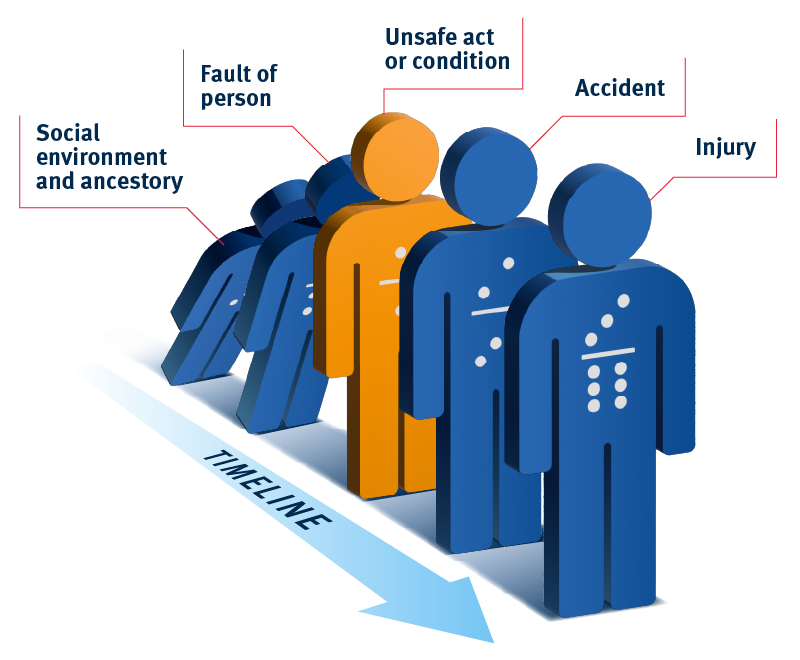

Heinrich’s Domino Model of Accident Causation – 1930s

Impact on safety thinking – ‘Blame the human’

Following his pyramid, Heinrich’s domino model describes accidents as the result of a causal chain of events (dominos). It introduces the concept of a linear ‘energy’ or sequence of events that leads to an accident. Removing one of the dominos can thus prevent the downstream accident or injury from occurring.

Impact on safety thinking:

- Accidents were now seen as the result of a ‘negative energy’ that could be interrupted to prevent them.

- Incidents were seen as the result of a linear sequence of events.

- Remained strongly focused on the human as the primary cause of incidents: “Fix the human and the problem will go away”.

Considerations:

- While Heinrich’s domino theory offers some structure for understanding accidents (and trying to learn from them), it led to over-simplistic understandings of why things go wrong.

- This can lead to linear concepts of causality, which are not appropriate in complex systems that tend to ‘fail in complex ways’.

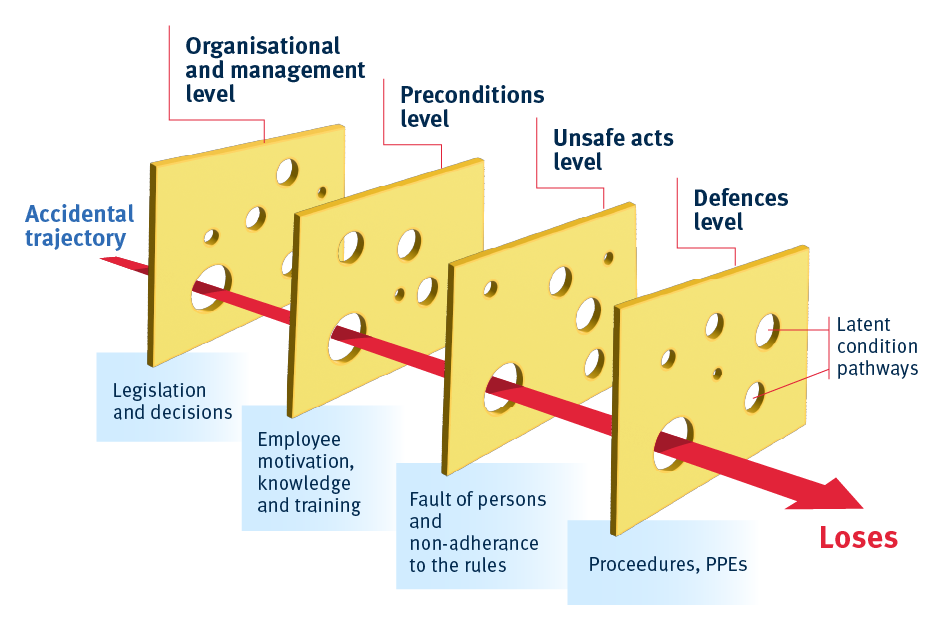

Reason’s Swiss Cheese Model – 1990s

Perhaps the most well-known model in healthcare is James Reason’s Swiss Cheese Model. Despite its similarities to Heinrich’s dominos, Reason’s model looked beyond the human, incorporating a focus on the systemic drivers of incidents.

The model of accident causation was based on the following premises:

- Layers of defence: Organisations have multiple layers of defence (slices of Swiss cheese) to prevent hazards from causing harm.

- Holes in the layers: Each layer has weaknesses or potential failures (holes) that are dynamic; that is, they can change in size and position.

- Latent conditions (pathogens): These are hidden problems within a system that can create holes in the layers of defence.

- Alignment of holes: An accident occurs when the holes in each layer line up, allowing a hazard to pass through all defences.

- Active failures: The direct actions or errors of individuals that can create or align holes.

Impact on safety thinking:

- Perpetuated the concept of linear causality / accident trajectory.

- Strengthened the belief that more defences can improve safety (this may not be correct).

- Introduced the concept of dynamic and ‘latent errors’ inherent in procedures, machines or systems.

- Its simplicity contributed to its rapid dogmatisation, especially in healthcare.

Considerations:

- Reason was the first critic of his own model: its rapid dogmatisation led to his concern that it was being used too broadly and widely.

- Notably, healthcare was one of the culprits in this rapid dogmatisation, as the model was published in the era when the Patient Safety movement was being established.

- Its ‘defences in depth’ message saw it used heavily in the Covid-19 pandemic.

- Reason eventually critiqued the model, acknowledging its limitations and the evolving nature of our understanding of complex systems and safety.

- Reason’s critiques and reflections highlight the importance of continuous improvement in our safety (and quality) methods. Applying an evidence-based approach, similar to the evidence-based medicine movement, will help ensure that we are aligned with best practice in these fields.

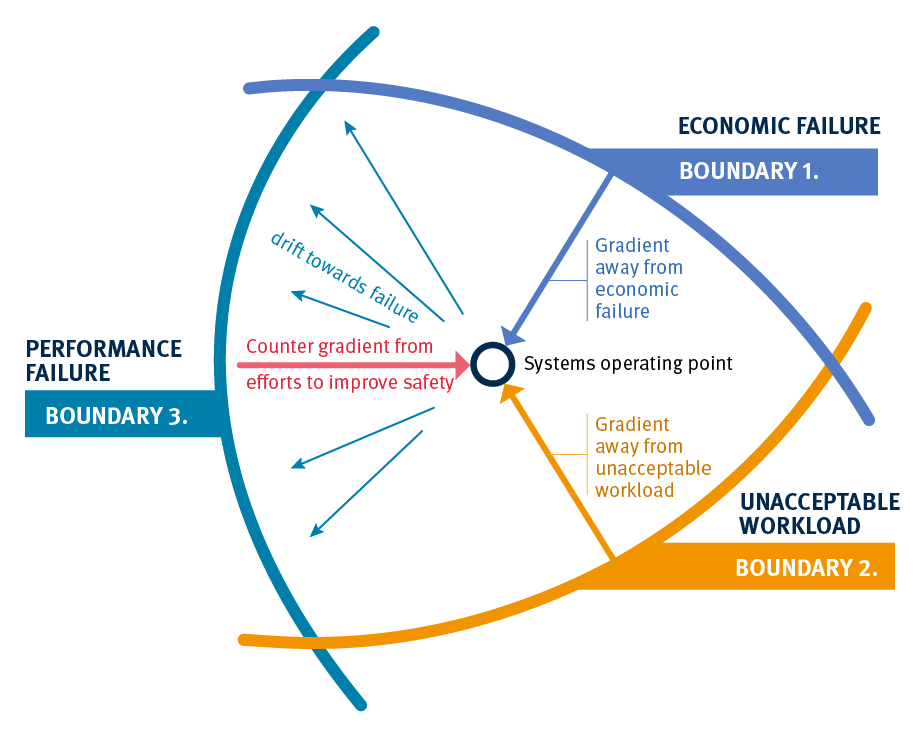

Dynamic Safety Model – 1980s

Key points:

- Boundaries of performance: Rasmussen described three key boundaries within which organisations must operate to avoid failure:

  - Economic failure: If costs become too high, the system fails economically. This pressure pushes the system to be more efficient and cheaper.

  - Unacceptable workload: If the workload is too high, the system fails because it can’t handle the work. This creates pressure to work faster, take shortcuts, or prioritise efficiency over thoroughness.

  - Performance failure: Crossing this boundary means an accident or error has occurred. It’s not always clear where the boundary lies until after something goes wrong. This boundary also includes a “margin” where risks are seen as acceptable, but operating here can lead to accidents.

How organisations operate:

- Balancing act: Organisations constantly move within these boundaries, trying to avoid crossing them while staying efficient.

- Close to the edge: To save money and reduce workload, organisations often operate close to the failure boundary. Over time, this can lead to what’s called the “normalisation of deviance,” where risky practices become the new normal.

- Drifting into danger: If these risky practices continue without clear danger signals, the organisation can gradually drift into failure.

Impact on safety thinking:

- Wider view on safety: The Dynamic Safety Model gives a broader view of how things can go wrong in organisations.

- Safety is not static: Safety needs continuous monitoring and adjustment, rather than a one-time fix.

- Ongoing risk management: Rasmussen’s model encourages organisations to be proactive, regularly checking and adjusting their practices to avoid crossing into dangerous territory.

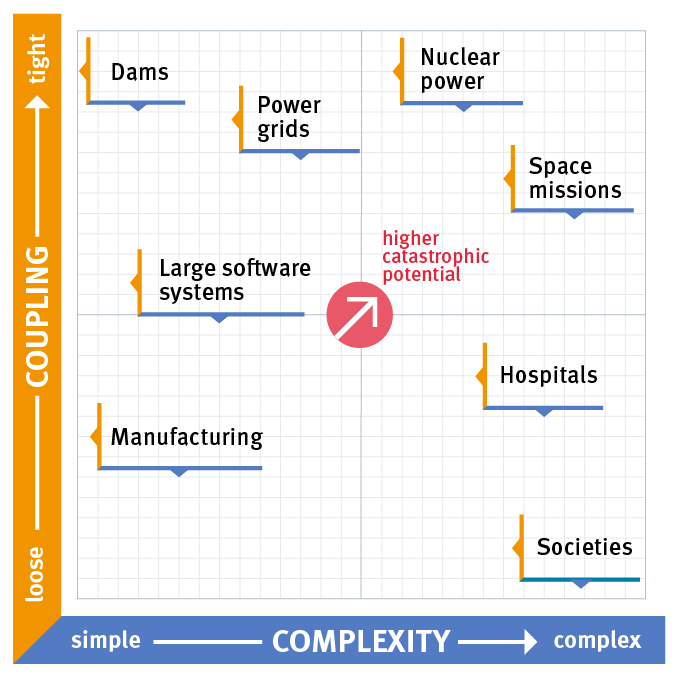

Perrow’s Normal Accident Theory

The Three Mile Island nuclear disaster showed how complex systems can fail in unexpected ways, challenging traditional safety views.

Key points:

- Unexpected failure: The nuclear industry believed its technology was safe and reliable, but a mix of unexpected failures, including some in the safety systems, led to a serious accident.

- Normal accidents: Charles Perrow, a Yale sociologist, introduced the idea that in very complex systems, accidents are inevitable. These are called “Normal Accidents” because they happen due to unpredictable interactions within the system.

- Unpredictable chain reactions: Small issues can snowball into major disasters because complex systems don’t always behave in straightforward ways.

Important concepts in healthcare:

- Interactive complexity: Hospitals are full of complex interactions between different teams, departments, and technologies. These interactions can lead to unexpected problems.

- Tight coupling: In healthcare, different processes are closely linked. A problem in one area, like a delay in medication, can quickly cause bigger issues elsewhere.

- Serious consequences: Mistakes in healthcare can lead to severe harm, making it crucial to understand and manage these risks.

What Perrow suggested:

- Redesigning systems: Since systems such as healthcare can’t be abandoned like other risky systems, it’s important to redesign them to minimise accidents, rather than just focusing on punishing mistakes or enforcing strict protocols.

Impact:

- Accidents may be unavoidable: In complex systems like healthcare, aiming for zero harm may not always be realistic.

- Simplify and separate: Systems should be designed to be as simple as possible and less interconnected where it makes sense.

- Identify and manage risks: Focus on finding and addressing system-wide risks, not just preventing individual mistakes.